Hsuan-I Ho passed the doctoral exam. Congratulations!

Research Overview

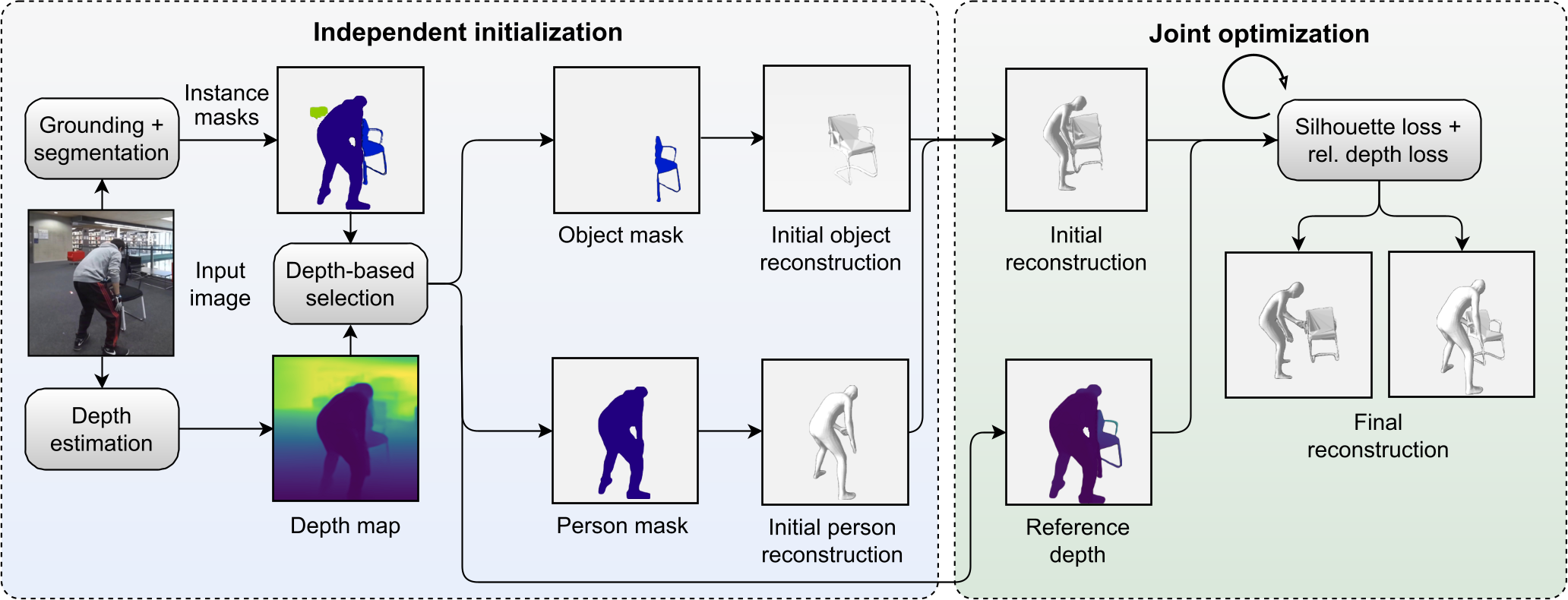

The AIT Lab conducts research at the forefront of human-centric computer vision. Our core research interests are algorithms and methods for the spatio-temporal understanding of how humans move within and interact with the physical world. We develop learning-based algorithms, methods, and representations for human- and interaction-centric understanding of our world from videos, images, and other sensor data. Application domains of interest include Augmented and Virtual Reality, Human-Robot Interaction, and more. Please refer to our publications for more information.

The AIT Lab, led by Prof. Dr. Otmar Hilliges, is part of the Institute for Intelligent Interactive Systems (IIS), in the Department of Computer Science at ETH Zurich.

Latest News

- Zicong Fan passed the doctoral exam. Congratulations!

- Marcel C. Buehler passed the doctoral exam. Congratulations!

- Chen Guo passed the doctoral exam. Congratulations!

- Yufeng Zheng passed the doctoral exam. Congratulations!