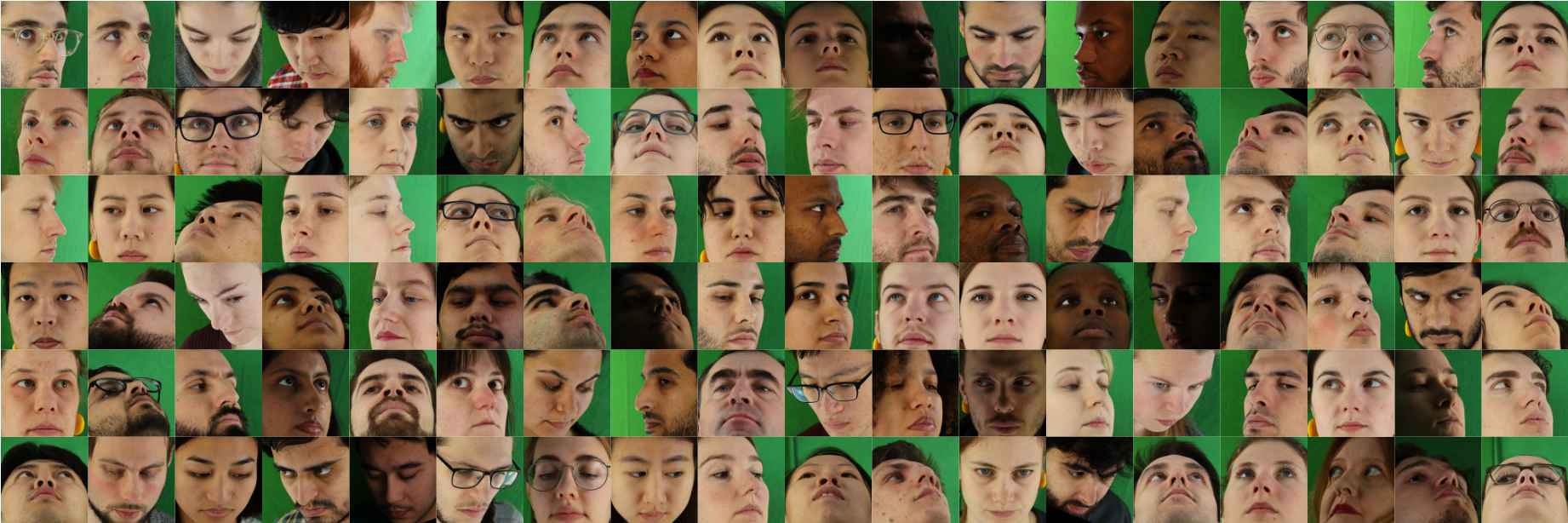

We propose the ETH-XGaze dataset: a large-scale (over 1 million samples) gaze estimation dataset with high-resolution images under extreme head poses and gaze directions.

Abstract

Gaze estimation is a fundamental task in many applications of computer vision, human-computer interaction, and robotics. Many state-of-the-art methods are trained and tested on custom datasets, making comparison across methods challenging. Furthermore, existing gaze estimation datasets have limited head pose and gaze variations, and the evaluations are conducted using different protocols and metrics. In this paper, we propose a new gaze estimation dataset called ETH-XGaze, consisting of over one million high-resolution images of varying gaze under extreme head poses. We collect this dataset from 110 participants with a custom hardware setup including 18 digital SLR cameras and adjustable illumination conditions, and a calibrated system to record ground truth gaze targets. We show that our dataset can significantly improve the robustness of gaze estimation methods across different head poses and gaze angles. Additionally, we define a standardized experimental protocol and evaluation metric on ETH-XGaze, to better unify gaze estimation research going forward.

Preview Video

Data Collection Demonstration

Acknowledgments

We thank the participants of our dataset for their contributions, our reviewers for helping us improve the paper, and Jan Wezel for helping with the hardware setup. This project has received funding from the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation programme grant agreement No. StG-2016-717054.

License

This dataset is released under the CC BY-NC-SA 4.0 license with additional terms and conditions. Please refer to the complete license file.

Downloads

- Dataset (face patch images of 224×224 pixels, about 130 GB; face patch images of 448×448 pixels, about 497 GB; and the full raw images, about 7 TB). The ETH-XGaze dataset is available on request. Please register here to gain access to the dataset.

- Leaderboard. Please first read the instructions on the leaderboard page for how to test your results.