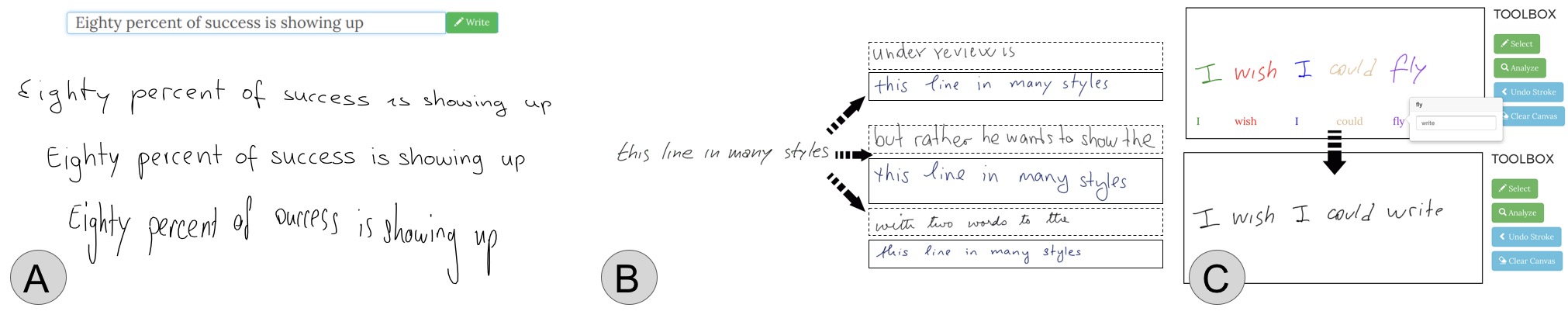

We propose a novel generative neural network architecture that is capable of disentangling style from content and thus makes digital ink editable. Our model can synthesize handwriting from typed text while giving users control over the visual appearance (A), transfer style across handwriting samples (B; solid-line box: synthesized strokes, dotted-line box: reference style), and even edit handwritten samples at the word level (C).

Abstract

Digital ink promises to combine the flexibility and aesthetics of handwriting with the ability to process, search and edit digital text. Character recognition converts handwritten text into a digital representation, albeit at the cost of losing the personalized appearance, because the interwoven components of content and style are technically difficult to separate. In this paper, we propose a novel generative neural network architecture that is capable of disentangling style from content and thus makes digital ink editable. Our model can synthesize arbitrary text while giving users control over the visual appearance (style). This allows for style transfer without changing the content, editing of digital ink at the word level, and other application scenarios such as spell-checking and correction of handwritten text. We furthermore contribute a new dataset of handwritten text with fine-grained annotations at the character level and report results from an initial user evaluation.

Video

Dataset

We collected new handwriting data from 94 authors using the IAMOnDB corpus. After discarding noisy IAMOnDB samples, we compiled a fully segmented dataset covering 294 authors in total. You can find the link to our training and validation splits below. If you use our data, we kindly ask you to cite our work. Because our dataset extends IAMOnDB, please also fill out IAMOnDB's registration form and follow their citation requirements.

License

This dataset is released under the CC BY-NC-SA 4.0 license with additional terms and conditions. Please refer to the complete license file.