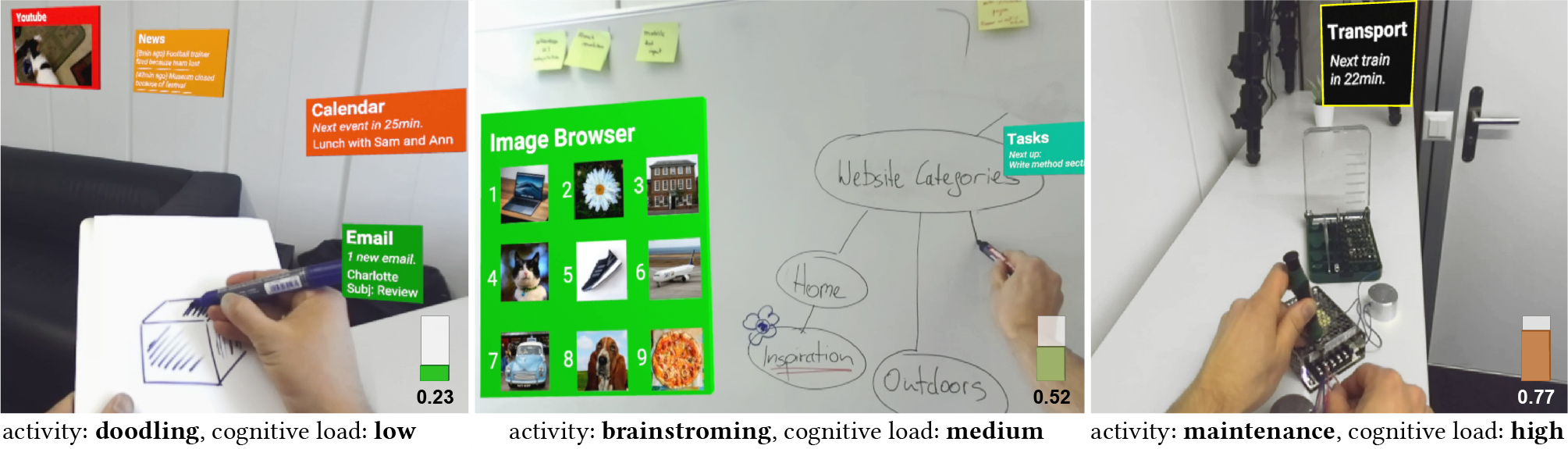

We propose an approach to automatically adapt Mixed Reality interfaces to the current context, for example, cognitive load, task and environment. We leverage combinatorial optimization to decide when, where and how to display virtual elements. For tasks with low cognitive load, our system displays more elements and in more detail (left). Increased cognitive load leads to a minimal UI with fewer elements at lower levels of detail.

Abstract

We present an optimization-based approach for Mixed Reality (MR) systems to automatically control when and where applications are shown, and how much information they display. Currently, content creators design applications, and users then manually adjust which applications are visible and how much information they show. This choice has to be adjusted every time users switch context, i.e., whenever they switch their task or environment. Since context switches happen many times a day, we believe that MR interfaces require automation to alleviate this problem. We propose a real-time approach to automate this process based on users' current cognitive load and knowledge about their task and environment. Our system adapts which applications are displayed, how much information they show, and where they are placed. We formulate this problem as a mix of rule-based decision making and combinatorial optimization, which can be solved efficiently in real time. We present a set of proof-of-concept applications showing that our approach is applicable in a wide range of scenarios. Finally, we show in a dual-task evaluation that our approach decreased secondary-task interactions by 36%.
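To make the idea of choosing what to show, and in how much detail, more concrete, the following is a minimal illustrative sketch and not the formulation used in this work. It assumes a hypothetical set of applications, each with made-up utility and cost values per level of detail, and a hypothetical budget that shrinks as cognitive load rises. Exhaustive enumeration stands in for a proper combinatorial solver, which is only workable for a handful of applications.

```python
from itertools import product

# Hypothetical applications. Each list holds one (utility, cost) pair per
# level of detail; level 0 means the application is hidden.
# All numbers are illustrative assumptions, not values from the paper.
APPS = {
    "messages": [(0.0, 0.0), (2.0, 1.0), (3.0, 2.5)],
    "weather": [(0.0, 0.0), (1.0, 0.5), (1.5, 1.5)],
    "music": [(0.0, 0.0), (1.5, 1.0), (2.0, 2.0)],
}


def choose_levels(apps, cognitive_load, max_budget=6.0):
    """Pick one level of detail per app by brute-force search.

    cognitive_load is assumed to be normalized to [0, 1]. The available
    budget shrinks linearly as load increases (an assumption made here
    purely for illustration).
    """
    budget = max_budget * (1.0 - cognitive_load)
    names = list(apps)
    # Start from the "everything hidden" assignment, which is always feasible.
    best_levels = tuple(0 for _ in names)
    best_utility = 0.0
    # Enumerate every combination of levels across all apps.
    for levels in product(*(range(len(apps[n])) for n in names)):
        utility = sum(apps[n][lvl][0] for n, lvl in zip(names, levels))
        cost = sum(apps[n][lvl][1] for n, lvl in zip(names, levels))
        if cost <= budget and utility > best_utility:
            best_levels, best_utility = levels, utility
    return dict(zip(names, best_levels))


low_load = choose_levels(APPS, cognitive_load=0.0)
high_load = choose_levels(APPS, cognitive_load=0.75)
print("low load: ", low_load)
print("high load:", high_load)
```

With these assumed numbers, a low load leaves room for every application at its highest level of detail, while a high load keeps only a couple of applications at a reduced level, mirroring the behavior described in the teaser caption. Placement (where elements appear) and the rule-based part of the decision are left out of this sketch.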

Accompanying Videos

Teaser video (30 sec):

Full video (5 min):

Acknowledgments

We would like to thank Alexandra Ion for her help and feedback, and Seonwook Park for his help with the video. This work was supported in part by the ETH Zurich Postdoctoral Fellowship Programme (ETH/Cofund 18-1 FEL-39) and the ERC Grant OPTINT (StG-2016-717054).